borderless farming

imagining integrated farming ecosystems

last week, i joined Natasha from Theory Gang to participate in a world-building project for a contest. in this contest, we are to imagine how the world would be organized in 2045, after artificial general intelligence (AGI) has been around for 5 years.

to be fair, for my readers here, the project is neoliberal flavored as we are to imagine these solutions put in place by FAANG (big tech) corporations, an idea which, if you know me, i hate. but for the sake of envisioning a world based on what is given, it’s not too hard to imagine these companies somehow surviving through all that is yet to come. still, the project got me excited because i love thinking about system-wide solutions.

during this discussion last Saturnday, i shared some of what i have learned about Web3 and decided to speculate on the agricultural supply chain solution using that knowledge. the question i am writing about is, “What is a major problem that AI has solved in your world, and how did it do so?”

here i won’t share my answer (i will make it available after we submit the project), but i wish to offer some insight into Web3 integration and how we as people can make use of this technology to take control of our lives, especially as it applies to the food supply chain.

so first of all, what is Web3?

well, if we are to understand Web3, it makes sense to return to Web1: what is it, when was it developed?

Web1 was a set of internet protocols that became publicly available in the late 80s/early 90s. basically, it allowed a user with internet access to create webpages, hosted by an internet service provider (ISP), for other users to passively consume.

personal web pages were common, consisting mainly of static pages hosted on ISP-run web servers, or on web hosting services. these websites were static HTML pages, not the dynamic pages generated by server-side code (PHP/Python/Ruby) that came later. in other words, these Web1 protocols were “read-only” protocols for peers in a network. functionally, Web1 was a content-delivery system: content exists and a user consumes it.

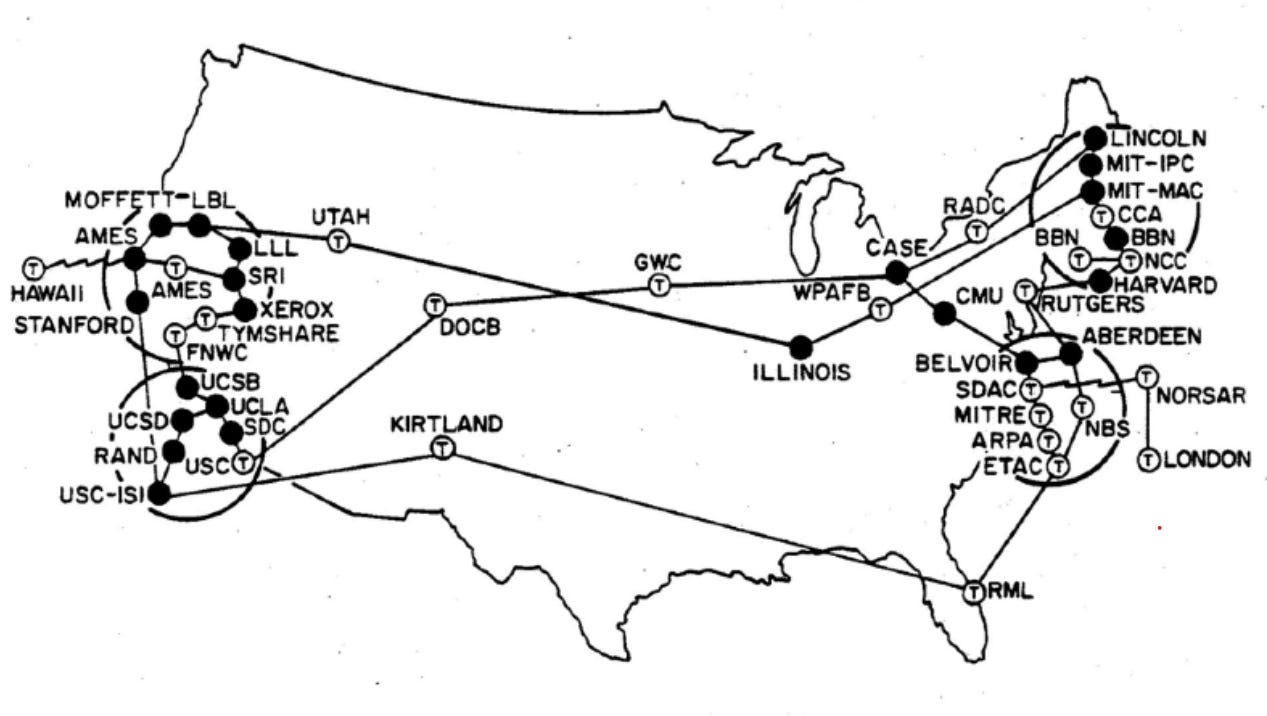

before we proceed any further, let’s look into this a little more carefully: BBC established internet access via Brunel University in 1989. how? because after two decades of research, the network science was ready to handle the public. still, how was Brunel University even granted internet access? this was thanks to ARPANET:

ARPANET, or Advanced Research Projects Agency Network, was the first wide-area packet-switched network with distributed control (connecting e.g. academic institutions, military organizations, and certain elements in the private sector, including for commercial use with access mediated by corporate entities), and it became the technical foundation of the internet. it was established in 1969 by the Advanced Research Projects Agency (ARPA), an agency of the DoD.

yes that’s right, the DoD funded research for what became the backbone of the internet.

so in 1990 ARPANET was discontinued because it was now all going public: the “net” became distributed.

by 1992, Sprint in the US and Pipex in the UK began to offer commercially available internet. other early connecting countries included Argentina, Brazil, Chile, Greece, India, Ireland, Norway, South Korea, Spain, and Switzerland.

so since a public citizen could “go online” as early as 1992, this means that there was already in existence infrastructure supporting inter-net capabilities. indeed, these were the telephone line structures which began to emerge in the 1870s, which is why during this period, humans connected to the internet using dial-up.

basically, a dial-up connection uses the public switched telephone network to carry data between computers: your computer’s modem uses the phone line to call the ISP, encoding digital data as audio signals that can be sent to another modem, which then decodes the audio it receives back into data for the computer on the other end.
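to make the encode/decode step concrete, here is a toy sketch of the idea in Python: each bit becomes a short burst of one of two tones, and the receiver checks which tone is stronger in each burst. the sample rate, bit rate, and the two frequencies are arbitrary values i picked for illustration, not the settings of any real modem standard.

```python
import math

SAMPLE_RATE = 8000               # samples per second (assumed)
BAUD = 100                       # bits per second (assumed)
FREQ_0, FREQ_1 = 1000.0, 2000.0  # tone for a 0 bit and a 1 bit, in Hz (assumed)
SAMPLES_PER_BIT = SAMPLE_RATE // BAUD


def modulate(bits):
    """Turn a list of 0/1 bits into audio samples, one tone burst per bit."""
    samples = []
    for bit in bits:
        freq = FREQ_1 if bit else FREQ_0
        samples.extend(
            math.sin(2 * math.pi * freq * i / SAMPLE_RATE)
            for i in range(SAMPLES_PER_BIT)
        )
    return samples


def tone_energy(chunk, freq):
    """Measure how strongly a given frequency is present in a chunk of samples."""
    s = sum(x * math.sin(2 * math.pi * freq * i / SAMPLE_RATE) for i, x in enumerate(chunk))
    c = sum(x * math.cos(2 * math.pi * freq * i / SAMPLE_RATE) for i, x in enumerate(chunk))
    return s * s + c * c


def demodulate(samples):
    """Recover bits by comparing the energy of the two tones in each burst."""
    bits = []
    for start in range(0, len(samples), SAMPLES_PER_BIT):
        chunk = samples[start:start + SAMPLES_PER_BIT]
        bits.append(1 if tone_energy(chunk, FREQ_1) > tone_energy(chunk, FREQ_0) else 0)
    return bits


message = [1, 0, 1, 1, 0, 0, 1, 0]
received = demodulate(modulate(message))
print(received == message)
```

a real phone line adds noise, echo, and timing drift, which is where most of the actual engineering in a modem goes; this sketch ignores all of that.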

a person could pay a high phone bill to host their own website (users were charged for time — that’s why LAN houses were popular in the early 2000s and why their service was that you paid for time to access the internet: because they too were charged by the time).

already you can see that unless you are someone with programming knowledge, i.e. you’re a college student in the late 80s/early 90s, an academic, a hobbyist, a military engineer, or a privately-funded engineer (using “engineer” to represent computer scientist, mathematician, internet technician, etc), you would not know what to do other than consume content.

over time, as more people hosted websites and more people joined the internet cyberspace, and more people learned to program, limitations to that kind of structure became apparent, and naturally people began developing solutions and innovating.

the next phase shift was marked by what is called the Web 2.0 Revolution (Web2).

what changes did Web2 introduce to Web1? whereas Web1 was read-only, Web2 is read-write.

going from “read-only” to “read-write” may seem like a trivial change but the effects were significant: these new protocols centered user-generated content, usability, and interoperability for end-users (not the backend, which is where Web3 will come in), creating a participative social network (more generally, a complex adaptive system).

the technical tools that facilitated this different design of websites centered on user-generated content came about with the combination of AJAX with HTML, ActionScript, and RSS.

around this time, i remember a lot of flash games came out, hosted on various websites. as a kid still in Brazil, i used to love playing games on US websites and on cartoonnetwork.com.br around 2004 — there were even cartoon marathons on the TV channel for Cartoon Network which would give you codes to access exclusive games on the website: it was interactive like that already early on, plugging the user to the TV and the internet — thus began our becoming-cyborg.

this simple extension of a website design, from static to dynamic content, is what allowed the planetary cybernetic culture of today to be what it is, in all its variations and similarities across all the regions which have internet access. it effectively opened space for creating online tools and platforms (software-as-a-service or SaaS) where people shared their perspectives, opinions, thoughts and experiences, through:

podcasting

blogging

tagging (folksonomy)

curating with RSS (feeds)

social bookmarking

social networking

social media

web content voting (likes, dislikes)

a bit of historical context here: perhaps because of the Twin Tower operation of 2001, perhaps not, but early on the US strategists began to send their security teams into the modern cyberspace — in fact they were already there, at the gates and watchtowers observing us. they already had technical knowledge so once the internet went public, the game became all about data processing and strategizing in this new terrain: data collected from the public internet use was analyzed and modeled — remember, this internet is being provided as a service by the DoD, so the powers that be are the ones who control the backend, they have all the network data and user meta-data to play with.

clearly, law and order would be difficult to maintain in such a dynamic space, so strategists had to adapt to new conditions: how do you maintain control in a domain where people could communicate across large physical distances at near instantaneous speeds, and possibly organize a united front?

it would be too obvious to simply restrict access as it would accelerate precisely what you wish to avoid with such restrictions: disorder. the other option is to simply modulate variance: if you control the trend of opinions, everything else will be based on what you narrate, with the benefit of creating the illusion of free speech. it is no wonder the US strategists decided to seriously invest in cybersecurity, proliferating new fields of inquiry for the sake of maintaining cybercontrol and therefore social control.

it’s worth pointing out that facebook began to be developed in 2003, and by 2004 it launched, becoming today the fourth most used web page on the planet averaging 2.8 billion monthly users (this is a lot of user data/metadata). by 2005, the C!A developed its own version of facebook and wikipedia called Intellipedia, which collates information from 18 US intelligence agencies, still in use today.

then, 4 years after facebook launched, suddenly, like a bifurcation in the phase space of western cultural development, a whitepaper is released in 2008 under an MIT public license, outlining a trustless electronic cash system that is fully peer-to-peer with no need for a trusted third-party: thus Bitcoin enters the scene.

parenthetically, i find it… most curious that one of the people involved with this project, according to Satoshi, the unknown author of this whitepaper, is Gavin Bell (Bell Labs anyone?) who later changed his name to Gavin Andresen (Mr. Anderson, is that you?).

anyway, this event marks the beginning of what is referred to today as the Web3 Revolution. keep in mind, this technological “revolution” is not complete, which is to say, there hasn’t been mass adoption yet and protocols are not fully developed and integrated: when you are paying for bread with cryptocurrencies, that’s when we will have crossed that boundary.

the essential new feature of Web3 is that, along with read and write now users can “save.”

that is, by using web3.js and similar libraries to create SaaS and products such as games, programmers can create networks of blockchains on which users/actors may save information publicly, according to some protocol of verification (e.g. proof-of-work or proof-of-stake or whatever else is to come), so others may interact with that information directly (whether it be a signature on a smart contract or currency ownership), instead of using a specific corporation’s API to access limited data.

that’s the “small” shift: read-write-save.
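to give a feel for what “save” means here, this is a minimal sketch of an append-only, hash-linked ledger in Python. it is not web3.js and not how any particular blockchain is implemented: there is no network, no signatures, and no proof-of-work or proof-of-stake. the `ToyLedger` class and its method names are hypothetical, made up for this illustration. the only thing it shows is why editing an old record is detectable once later records point back to it.

```python
import hashlib
import json


def block_hash(block):
    """Hash a block's full contents in a stable (sorted-key) JSON form."""
    payload = json.dumps(block, sort_keys=True).encode()
    return hashlib.sha256(payload).hexdigest()


class ToyLedger:
    """Hypothetical in-memory ledger: each block stores the hash of the previous one."""

    def __init__(self):
        self.blocks = []

    def save(self, data):
        """Append a record and return the hash of the new block."""
        prev = block_hash(self.blocks[-1]) if self.blocks else "0" * 64
        block = {"index": len(self.blocks), "prev": prev, "data": data}
        self.blocks.append(block)
        return block_hash(block)

    def is_valid(self):
        """Check that every block still points at the true hash of its predecessor.

        Note: the newest block has no successor yet, so this toy check
        cannot detect tampering with the very last record.
        """
        for i in range(1, len(self.blocks)):
            if self.blocks[i]["prev"] != block_hash(self.blocks[i - 1]):
                return False
        return True


ledger = ToyLedger()
ledger.save({"signer": "alice", "contract": "lease-001"})
ledger.save({"signer": "bob", "contract": "lease-001"})
print(ledger.is_valid())                      # chain is intact

ledger.blocks[0]["data"]["signer"] = "mallory"  # rewrite history
print(ledger.is_valid())                      # the link from block 1 no longer matches
```

in a real network many independent nodes hold copies of the chain and agree on new blocks through the verification protocol, which is what makes the saved information public and hard to rewrite rather than just tamper-evident.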

but remember: just as the internet was developed by the military and later introduced to the public, it is entirely possible that the “save” part introduced by the Bitcoin whitepaper came from the military/government side. whatever the case, it remains that if this technology is only used within the parameters afforded by today’s capitalism, i.e. to make some fuckers rich, then we are fucked, for the venture capitalist’s control over the conditions that parametrize our lives would become even more automated and streamlined; in short, their rule would become visibly, brutally authoritarian.

before we proceed, a couple key characteristics of the Web3 umbrella that should be noted for precision agriculture:

it involves a semantic web: this will become the most important area of research e.g. natural language processing along with OWL (a W3C standard since 2004, with OWL 2 following in 2009), because through the adoption and use of Web3 protocols, the internet as it is will be transformed in a way where data is not saved privately in servers but rather is distributed, shared; services would show different views for the same data

it involves the use of artificial intelligence applied to data gathered from blockchains and users. check out SingularityNET for an example of AI marketplace — there you may buy an AI algorithm designed for a specific task. we are approaching the developmental stage where we would have self-learning AI entities occupying these data-spaces processing information constantly uploaded to this semantic web. once something like that exists, artificial general intelligence (AGI) wouldn’t be too far off, and once that happens, it would quickly become artificial super intelligence (ASI). so long as that’s the general technological trend, we humans have to be prepared for that transition because it could be bad — of course, assuming we ever get to that point, this is all speculation

so when discussing this, i’m fully aware of the negative impacts — yet, just as i can imagine the internet as it exists now being useful precisely because it is a tool of war, i can also imagine a Web3 backend being useful.

so here i’m going to speculate on how these technological tools can be used for our benefit based on what is currently available.

i will leave the discussion of Web3 proper for another essay, in which i will discuss how the cryptography works, how smart contracts function and how they are written, why gas/transaction fees exist, examples of what is already being done with this web3.js library, and so on.

if a lot of what follows doesn’t make much sense, i will provide the background later on, so just go with me for now, it should be easy to follow.

i begin the analysis by considering the agricultural supply chain:

this isn’t meant to be a faithful representation of the supply chain, but it’s a decent enough sketch: input supply goes into producer; produced output is transported to warehouse and processing facilities; these nodes send processed goods to wholesalers (Mandi is Asia's largest wholesale market for fruits and vegetables); wholesalers distribute to retail/supermarket/grocery stores and institutions/restaurants/fast food; finally reaching consumers.

input supply includes fertilizer (which is going to take a hit because Russia provides a lot of fertilizer to the west and they’ve restricted export), agrochemicals, even seeds now.

the arrows (edges/links) connecting nodes here represent transportation systems used (trucks, planes, ships) — of course, multiple systems might be employed for the transportation of a single commodity or for the transportation of multiple commodities.

affecting producers are factors such as significant weather variations (droughts, floods), pests, diseases to name some.

although many different foods use similar farming systems, some might require different elements altogether (e.g. a drying process, different soil). as farmers continue to implement technology in their operations, the result is a variety of discrete solutions that don’t communicate with each other, producing siloed data e.g. the soil sampler might not share data with the irrigation system, and the weather system doesn’t communicate with irrigation so it might irrigate right before a rain.

on this very node (the production node), i carry out an analysis to develop farming systems that would communicate “efficiently” (to be defined) between different subsystems. for example, rice and lettuce don’t require the same amount of water, so if you grow these two, the irrigation system for your whole operation would have to be composed of independent subsystems which are triggered (turned on/off) at different time intervals, manually or automated. the idea, of course, is to automate most of these, so i proceed accordingly.
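as a rough sketch of what “communicating subsystems” could look like, here is a small Python example where each irrigation zone has its own target and the decision to water also listens to the weather forecast. the `Zone` fields, the moisture targets for rice and lettuce, and the 0.6 rain threshold are all made-up illustrative values, not agronomic recommendations.

```python
from dataclasses import dataclass


@dataclass
class Zone:
    crop: str
    target_moisture: float  # desired soil moisture as a 0..1 fraction (assumed scale)
    moisture: float         # latest soil sensor reading, same scale


def should_irrigate(zone, rain_probability, rain_threshold=0.6):
    """Decide per zone, using both the soil sensor and the weather forecast."""
    if zone.moisture >= zone.target_moisture:
        return False  # soil is already wet enough for this crop
    if rain_probability >= rain_threshold:
        return False  # rain is likely, so don't irrigate right before it
    return True


zones = [
    Zone(crop="rice", target_moisture=0.80, moisture=0.65),
    Zone(crop="lettuce", target_moisture=0.45, moisture=0.50),
]

dry_day = {z.crop: should_irrigate(z, rain_probability=0.2) for z in zones}
rainy_day = {z.crop: should_irrigate(z, rain_probability=0.9) for z in zones}
print(dry_day)    # {'rice': True, 'lettuce': False}
print(rainy_day)  # {'rice': False, 'lettuce': False}
```

the point of the design is that each zone is triggered independently, while the weather reading is shared by all of them instead of sitting in its own silo.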

another major problem that ends with the consumer but begins with producers is wastefulness due to inefficiencies downstream in the supply chain. as a result, less food is sold/consumed than is produced/output. but don’t get me twisted, this isn’t about maximizing profit, this is about maximizing output to feed people.

according to a 2019 UN report, the current population of 7.7 billion will grow by another 2 billion in the next 30 years to reach 9.7 billion people by 2050. as this happens, the UN Food and Agriculture Organization expects that people are likely to consume 30% more calories on average by that time as they can afford more varied and protein-rich diets.

the point is that should these estimates prove correct, in the following three decades, people will need to produce up to 70% more food to keep up with demand.
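a quick back-of-the-envelope check of how those numbers combine, in Python. the assumption that total food demand is simply population times per-capita calories is mine, a simplification and not the method used in the UN or FAO reports, so treat the result as an order-of-magnitude sanity check only.

```python
def demand_growth(pop_now, pop_future, per_capita_growth):
    """Fractional growth in total demand, assuming demand = population x per-capita calories."""
    population_factor = pop_future / pop_now
    return population_factor * (1 + per_capita_growth) - 1


population_growth = 9.7e9 / 7.7e9 - 1
total_growth = demand_growth(7.7e9, 9.7e9, 0.30)

print(f"population growth: {population_growth:.0%}")  # 26%
print(f"food demand growth: {total_growth:.0%}")      # 64%
```

under that simplification the increase comes out around 64%, which is in the same range as the “up to 70%” figure.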

yet currently, on its way from farm to fork, 40% of food in America gets spoiled and binned.

so to begin, i have to consider transportation and production.

i zoom in on the production node: how would global environmental factors cause local challenges for farmers around the planet?

rising temperature affects crop production by drying the soil, affecting soil quality (nutrient deficiencies)

higher CO2 levels affect crop yields, leading to decreased quality due to decreased levels of protein and nitrogen; worth pointing out that drops in grain and forage quality will also impact livestock

extreme weather conditions like natural disasters and hydrologic events like floods and droughts harm and destroy crops

increasing population leads to increased demand (though remember, we produce enough food to feed 10 billion as inefficient as it is; it really is how we distribute and manage our resources that’s the problem i.e. it is capitalism that is the problem: crony-capitalism, whatever-capitalism, it’s all Capitalism, loves, this is literally what Capitalism affords in theory and practice)

urbanization is impacting global food demand since city dwellers have specific preferences; this plethora of demands for all sorts of processed foods, vegan or not, can and should be eliminated or minimized with proper food education

summarily, by 2050:

34% population increase (but not if Gates has something to say about it)

9.7 billion people to feed

50% population living in cities

40% decrease in food production due to environmental changes

30% decrease in farmland

4 Celsius average temperature increase

50% increase in areas with water shortages

so clearly farmers need to grow more efficiently while considering quantity due to increasing population (Malthusians and Grantians, i will fight every single one of you every samsara) and quality loss due to environmental factors.

consequently, because of this and limited farmland, farmers have to basically solve an optimization problem to grow more with less. the practical solution, of course, is adopting sustainable agricultural practices — observing and listening to the land.

here i wish to think of a system that is at least robust and at best, antifragile. that is, i want a system that can remain relatively stable through small variations, or that becomes better/stronger from these small variations. these small variations are the day-to-day weather patterns, pests, and diseases it fights — errors carry information.

one focus that capitalists have when discussing these projects is scalability. i think that is important but their emphasis on it is simply due to their profit-chasing, they’re capitalists after all.

instead, we should develop models that easily scale up to a comfortable limit and distribute them, instead of trying to scale the solution to a continental or global level right away. i think if you have distributed but fully integrated small farming ecosystems, they will naturally connect with one another and become continental and planetary.

i take this approach because if a system is fragile (which our agricultural supply chain is) then to make it less fragile i.e. more robust and antifragile, we have to introduce redundancies: redundancies decrease fragility.

the redundancy here is achieved by creating wholly integrated farming ecosystems (modular farms) that can be applied from the household to the multi-acre wheat farm. once in place, these can in turn connect with one another via the internet.

why redundancy? layers of redundancy are the central risk management property of natural systems. we humans have two kidneys, extra spare parts, and extra capacity in many, many things (lungs, neural system, arterial apparatus), while human-designed systems tend to be spare and inversely redundant: we have a historical track record of engaging in debt, which is the opposite of redundancy ($50k in extra cash in the bank or, better, under the mattress, is redundancy; owing the bank an equivalent amount, that is, debt, is the opposite of redundancy).

redundancy is not necessarily passive — it can be extremely aggressive. for instance, if you have extra inventory of, say, fertilizers in the warehouse, just to be safe, and there happens to be a shortage because of disruptions in… Russia, you can sell the excess inventory at a huge premium. or if you have extra oil reserves, you may sell them at a large profit during a squeeze… kkkkkkkkkkkkk.

in fact, this kind of redundancy as overcompensation is found in natural systems e.g. hormetic response — if you take a little bit of poison over a period of time, it will strengthen your immunity to a higher dosage (in technical terms, hormesis refers to adaptive responses of biological systems to moderate environmental or self-imposed challenges through which the system improves its functionality and/or tolerance to more severe challenges).

a similar response of redundancy as overcompensation is observed in the body during lifting: if you bench press 100 kg once, your body will not prepare you to press 100 kg again or even 101 kg but will prepare you to lift 110 kg.

of course as we can imagine, a process or thing is antifragile only up to a certain point — your body will not gain from the disorder brought about by jumping from the 7th floor.

still, we should look to this hormetic response system to build our agricultural supply chain, which is where tools such as drones and blockchain and AI would come in — but we have to have control or ownership over the design or use of these systems.

the issue of governance and ownership is where blockchain comes in. as i said, i will explain details in a separate post so trust me on this one for now bro, but because information will be recorded on a distributed ledger (so it’s public), information recorded about our foods at every stage of the agricultural supply chain would be available and could be used to monitor storage conditions and ensure quality.

moreover, self-executing smart insurance contracts built on distributed ledger technology would provide better insurance for all actors in the supply chain because smart contracts would eliminate the human factor from assessing insurance claims, making the claim process simpler, faster, and more transparent.
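to illustrate what “self-executing” means here, this is a plain Python sketch of a rainfall-triggered policy that pays out by rule, with no adjuster in the loop. it is not a real smart contract: an actual one would live on-chain and would need a trusted data feed (often called an oracle) for the rainfall figure. the `DroughtPolicy` fields, the trigger value, and the `settle` function are all hypothetical names and numbers chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class DroughtPolicy:
    farmer: str
    insured_amount: float
    rainfall_trigger_mm: float  # pay out if seasonal rainfall falls below this


def settle(policy, seasonal_rainfall_mm):
    """Return the payout owed under the policy; the rule is the whole claim process."""
    if seasonal_rainfall_mm < policy.rainfall_trigger_mm:
        return policy.insured_amount
    return 0.0


policy = DroughtPolicy(farmer="farm-17", insured_amount=5000.0, rainfall_trigger_mm=300.0)
print(settle(policy, seasonal_rainfall_mm=220.0))  # 5000.0 (below trigger, pays out)
print(settle(policy, seasonal_rainfall_mm=410.0))  # 0.0 (enough rain, no payout)
```

the frozen dataclass is only a loose stand-in for the on-chain property that the terms can’t be changed after signing; the hard part in practice is agreeing on who reports the rainfall.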

this is an important point because i found out that counterfeit seeds are a huge problem in the Global South: for example, 20% of seeds sold in Africa are fake (note this report was made for the Bill and Melinda Gates Foundation in collaboration with the Monitor Deloitte consulting agency — sus, i know), which results in unfavorable food quality.

smart contracts would also minimize the risks of false claims and corruption among insurance providers, seed and agrochemical suppliers, as no actor would be able to alter insurance policies once they’re digitally signed and on-chain. this connects to what i have written regarding how this technology would eliminate the human factor in certain parts of a system, at which point corporate rule would become even harsher. of course in certain situations this automation might be beneficial for the whole subsystem, like agriculture, but it won’t be so for every subsystem, e.g. a housing system with automated eviction, as there would be no human leniency since all agreements are coded on a smart contract, alerting the police and judicial system right away.

anyway, here’s a summary of ways blockchain could improve the agricultural supply chain:

improved quality of products and fewer diseases originated from food

fair pricing through the whole supply/value chain for all actors

financing and insurance for small farmers (decentralized loaning protocols already exist, like Compound.finance or Aave or Curve)

facilitation of financial transactions by decreasing fees and not depending on third-party like banks or visa/mastercard

traceability throughout the supply/value chain

more informed consumer purchasing decisions

transparent transactions and elimination of fraud

data accessibility

in other words, supply/value chain blockchain services can mitigate the risk of counterfeits and increase the efficiency of blockchain-based agriculture by providing transparency and eliminating unnecessary third parties. in addition, by reducing uncertainty and enabling trust among actors, distributed ledgers and smart contracts provide opportunity for more inclusive market participation among micro (household), small, and medium enterprises, distributing access to sustainable and efficient food growing to all.

having this technology would immediately address the basic challenges imposed by transportation. while transaction data can be traced by the cryptographic fingerprint attached to each transaction, the movement of a physical product along a supply chain from farm to consumer requires a much more immutable product–process link, which a blockchain affords because once verified on-chain, the data cannot be edited. available to us we have:

tracking using quick-response (QR) codes on the product’s packaging (QR codes in agriculture are already used but still require regulators to monitor authenticity from a seed to a ready product)

advanced radio frequency identification (RFID) chips and RFID supply chains for agricultural products

near-field communication (NFC) agriculture technology for contactless reading of product data from RFID

crypto-anchor technology for agriculture (IBM recently developed cryptographic anchors to ensure product authenticity for distributed ledger technologies)

all of this can be used in combination with other “solutions” creating an ecosystem of devices that communicate with each other, sending real-time information like alerts to farmers.

there are plenty of technologies available that can be combined to create a sustainable farming ecosystem: drones; satellites connecting GIS tools and GPS tracking (location intelligence); IoT sensors; automated steering and guidance with navigation and mapping systems; ISOBUS; robotics systems, including swarm technologies and drone guidance; blockchain and distributed ledger technologies; route optimization for heavy machinery; machine learning (ML) and AI (like image recognition).

i sketch a possible path:

data is collected from IoT sensors, machinery, satellites, and weather stations

capture field images from drones and satellites

create precise maps

analyze soil moisture and measure soil parameters

predict weather and project crop yields

minimize use of resources: land, fertilizer, water

precisely plan irrigation, fertilization, and harvesting

create measurable plans for production to meet projected food demand

data is structured and analyzed using historical (weather) data and AI or ML

use predictions and data as inputs for automated actions

train automated and self-learning AI systems

use robotics for seeding and harvesting (on a small/medium/large scale — you could still manually labor at home)

use drones for fertilizing fields

precisely deliver water to different crops, reducing water consumption

automate climate control for indoor farms

automate guidance and steering for autonomous tractors and harvesters

automate transportation of heavy agricultural machinery to fields and other farmers

collect data from equipment and transfer it to data platforms

automate reporting for analytics and future planning based on output data, becoming the new input (forming a feedback loop)

all of this would improve decision-making and increase farm efficiency: increased quality and quantity of yields, timely transportation, data transparency and accessibility about our food to better inform us, effective water use, effective land distribution and use.

the imagined system above would be antifragile on its own because it would be learning from small errors day-to-day. but antifragility is a spectrum, so to make it even more antifragile, i would distribute ready-made modular farming systems with ready-to-assemble modules (yes, we would have to make use of existing markets to produce these modules). included would be a small drone for aerial mapping, various sensors for soil quality and temperature, air quality and temperature, etc, and free access to a farming dashboard that facilitates the intervention process by providing easy-to-interpret data, analytics, etc.

a unified ecosystem would combine all these solutions to collect data from a blockchain and process data in the cloud. these analytics would provide actionable inputs for agricultural equipment, which is itself adjusted with self-learning AI systems. by matching historical data, including data on previous harvests and weather conditions, with data from maps and aerial images as well as the current weather, machinery would automatically start harvesting areas where crops are ready — while that happened, other fields would get the required irrigation and fertilization from automated robots and drones.

with this implemented ecosystem of technologies, a small/medium/large scale farmer wouldn’t need to wake up at 4 or 5 am. instead, the farm would work automatically as analytics from the fields and connected machinery are shown on user-friendly dashboards to help farmers make decisions based on relevant data.

this is of course just the technological aspect, which has to be complemented by education. i haven’t looked into this yet, but to achieve this level of technological integration with farming, the education system training future farmers should include the use of predictive farming technologies, sustainable practices, regenerative farming methods, and permaculture design.

the combination of land organization based on regenerative farming and permaculture principles, AGI, and distributed ledger technologies that seamlessly integrates modular farms for individual/shared households, with free access to aggregate data from all farming operations which your farming module would connect to, is the ideal state which would transform our currently fragile agricultural supply chain into an antifragile system.

if this is a desirable state of affairs (sans the implied corporate ownership, of course), what steps would we take to proceed in that direction?

more on this to come.
